Overview After spending quite a bit of time getting my Cisco VDSL router working with PPPoE, I thought others might benefit from an example configuration, …

Author: Tom

Technology enthusiast with many ongoing online projects, one of which is this personal blog, PingBin, while also working full time in a data center designing and maintaining the network infrastructure.

ShellShock – Patching Unless you’ve been under a rock for the last few days, you’ve probably heard about the new Bash exploit (CVE-2014-6271) ‘ShellShock’ that allows …

The Problem After the Bash exploit ‘ShellShock’ was released a few days ago, I’ve been going around my servers applying the required patches; however, after …

Introduction. TL;DR – Go to Installing Squid. Yum is a great package manager for CentOS that is the secret envy of every Windows system administrator on …

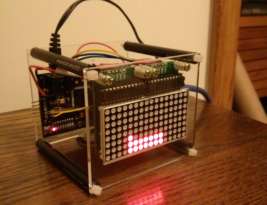

The home and small business routers that we geeks would be interested in buying these days ship with SNMP server functionality built in as …